Amazon Bedrock provides a broad range of models from Amazon and third-party providers, including Anthropic, AI21, Meta, Cohere, and Stability AI. These models cover a wide range of use cases, including text and image generation, embedding, chat, high-level agents with reasoning and orchestration, and more. Knowledge Bases for Amazon Bedrock allows you to build performant and customized Retrieval Augmented Generation (RAG) applications on top of AWS and third-party vector stores, using both AWS and third-party models. Knowledge Bases for Amazon Bedrock automates the synchronization of your data with your vector store. This includes diffing the data when it’s updated, document loading, chunking, and semantic embedding. You can seamlessly customize your RAG prompts and retrieval strategies; we provide the source attribution, and we handle memory management automatically. Knowledge Bases is completely serverless, so you don’t need to manage any infrastructure. When using Knowledge Bases, you’re only charged for the models, vector databases, and storage you use.

RAG is a popular technique that combines the use of private data with large language models (LLMs). RAG starts with an initial step to retrieve relevant documents from a data store (most commonly a vector index) based on the user’s query. It then employs a language model to generate a response by considering both the retrieved documents and the original query.

In this post, we demonstrate how to build a RAG workflow using Knowledge Bases for Amazon Bedrock for a drug discovery use case.

Overview of Knowledge Bases for Amazon Bedrock

Knowledge Bases for Amazon Bedrock supports a broad range of common file types, including .txt, .docx, .pdf, .csv, and more. To enable effective retrieval from private data, a common practice is to first split these documents into manageable chunks. Knowledge Bases has implemented a default chunking strategy that works well in most cases to allow you to get started faster. If you want more control, Knowledge Bases lets you control the chunking strategy through a set of preconfigured options. You can control the maximum token size and the amount of overlap to be created across chunks to provide coherent context to the embedding. Knowledge Bases for Amazon Bedrock manages the process of synchronizing data from your Amazon Simple Storage Service (Amazon S3) bucket, splits it into smaller chunks, generates vector embeddings, and stores the embeddings in a vector index. This process comes with intelligent diffing, throughput, and failure management.
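The two knobs described above, maximum chunk size and overlap, can be illustrated with a small pure-Python sketch. This is not the Knowledge Bases implementation. The function name chunk_fixed_size is hypothetical, and whitespace-separated words stand in for real model tokens. The sketch only shows how an overlap percentage turns into a sliding window.

```python
def chunk_fixed_size(text: str, max_tokens: int = 300, overlap_pct: int = 20) -> list[str]:
    """Split text into fixed-size chunks with a percentage overlap.

    Illustration only: whitespace-separated words approximate tokens here;
    Knowledge Bases uses its own tokenizer and chunking logic.
    """
    if max_tokens < 1:
        raise ValueError("max_tokens must be at least 1")
    if not 0 <= overlap_pct < 100:
        raise ValueError("overlap_pct must be in [0, 100)")
    tokens = text.split()
    # Number of tokens repeated at the start of each following chunk.
    overlap = max_tokens * overlap_pct // 100
    step = max_tokens - overlap  # >= 1 because overlap_pct < 100
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + max_tokens]))
        if start + max_tokens >= len(tokens):
            break  # this window already reaches the end of the text
    return chunks


# Example: 4-token chunks with 50% overlap share 2 tokens with their neighbor.
# chunk_fixed_size("a b c d e f g h i j", max_tokens=4, overlap_pct=50)
```

With 50 percent overlap, each chunk repeats the last half of the previous one. This is what keeps a sentence that straddles a boundary intact in at least one chunk.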

At runtime, an embedding model converts the user’s query to a vector. The vector index is then searched for documents similar to the query by comparing the document vectors with the query vector. In the final step, the semantically similar documents retrieved from the vector index are added as context to the original user query. The text model then generates a response from this augmented prompt and includes source attribution for traceability.
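The runtime flow above can be sketched with toy vectors in place of real embeddings. The sketch ranks chunks by cosine similarity to the query vector and then assembles a prompt that keeps each chunk's source for attribution. In Knowledge Bases, the embedding model and the vector store perform these steps for you. The helper names (cosine_similarity, top_k, assemble_prompt) and the prompt wording are assumptions made for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, chunk_vecs, k=3):
    """Indices of the k chunk vectors most similar to the query, best first."""
    ranked = sorted(
        range(len(chunk_vecs)),
        key=lambda i: cosine_similarity(query_vec, chunk_vecs[i]),
        reverse=True,
    )
    return ranked[:k]


def assemble_prompt(question, chunks, sources):
    """Add retrieved chunks as numbered, source-tagged context for the question."""
    context = "\n".join(
        f"[{i + 1}] ({src}) {text}" for i, (text, src) in enumerate(zip(chunks, sources))
    )
    return (
        "Answer using only the context below and cite sources by number.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

In a real pipeline, an embedding model such as Titan Embeddings produces the vectors, and the vector database performs the nearest-neighbor search at scale.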

Knowledge Bases for Amazon Bedrock supports multiple vector databases, including Amazon OpenSearch Serverless, Amazon Aurora, Pinecone, and Redis Enterprise Cloud. The Retrieve and RetrieveAndGenerate APIs allow your applications to directly query the index using a unified and standard syntax without having to learn separate APIs for each different vector database, reducing the need to write custom index queries against your vector store. The Retrieve API takes the incoming query, converts it into an embedding vector, and queries the backend store using the algorithms configured at the vector database level; the RetrieveAndGenerate API uses a user-configured LLM provided by Amazon Bedrock and generates the final answer in natural language. The native traceability support informs the requesting application about the sources used to answer a question. For enterprise implementations, Knowledge Bases supports AWS Key Management Service (AWS KMS) encryption, AWS CloudTrail integration, and more.

In the following sections, we demonstrate how to build a RAG workflow using Knowledge Bases for Amazon Bedrock, backed by the OpenSearch Serverless vector engine, to analyze an unstructured clinical trial dataset for a drug discovery use case. This data is information-rich but can be highly heterogeneous. Specialized terminology and concepts appear in different formats, and handling them properly is essential to surface insights and ensure analytical integrity. With Knowledge Bases for Amazon Bedrock, you can access detailed information through simple, natural queries.

Build a knowledge base for Amazon Bedrock

In this section, we walk through the process of creating a knowledge base for Amazon Bedrock on the console. Complete the following steps:

- On the Amazon Bedrock console, under Orchestration in the navigation pane, choose Knowledge base.

- Choose Create knowledge base.

- In the Knowledge base details section, enter a name and optional description.

- In the IAM permissions section, select Create and use a new service role.

- For Service name role, enter a name for your role, which must start with AmazonBedrockExecutionRoleForKnowledgeBase_.

- Choose Next.

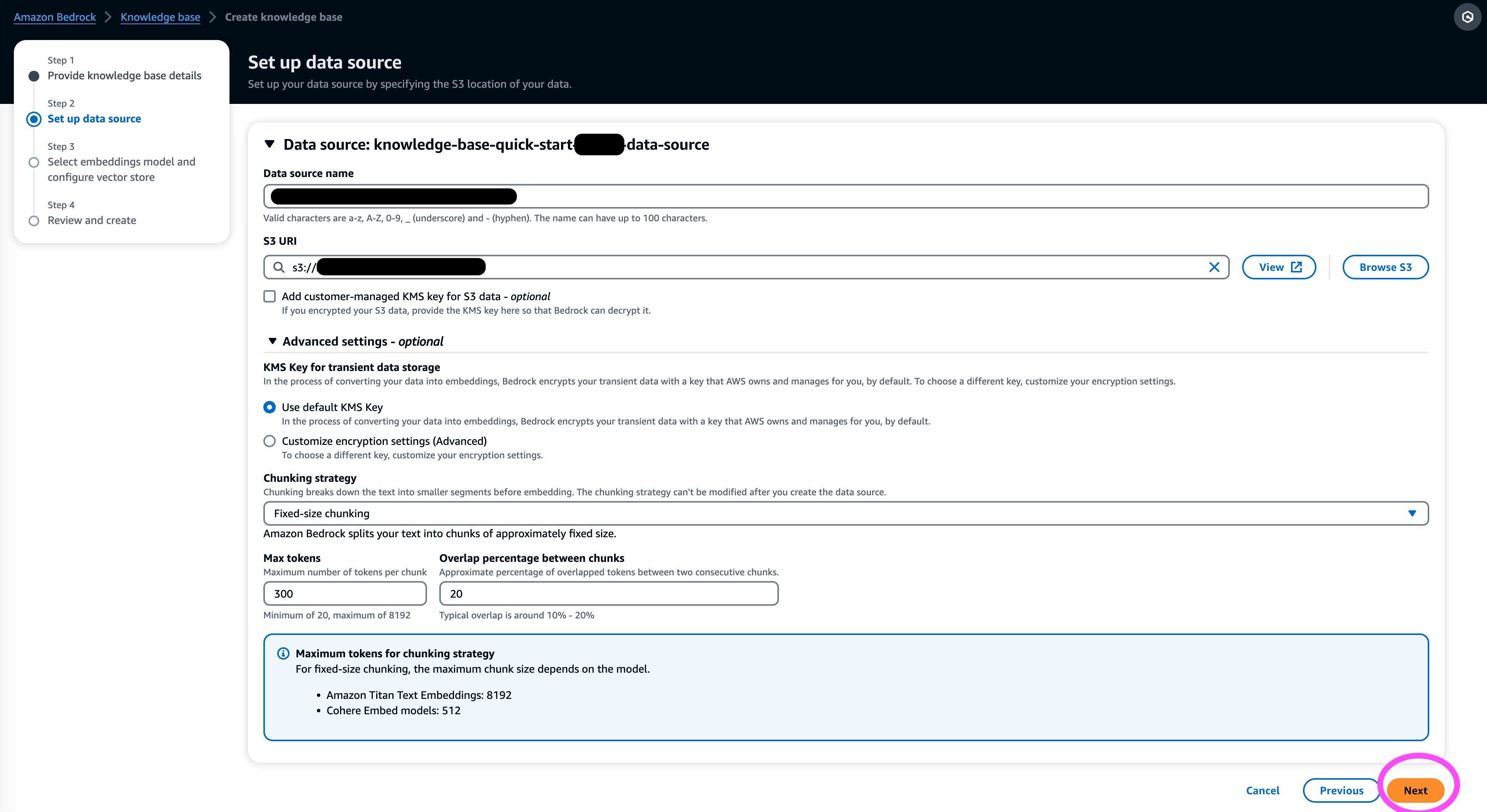

- In the Data source section, enter a name for your data source and the S3 URI where the dataset is stored. Knowledge Bases supports the following file formats:

- Plain text (.txt)

- Markdown (.md)

- HyperText Markup Language (.html)

- Microsoft Word document (.doc/.docx)

- Comma-separated values (.csv)

- Microsoft Excel spreadsheet (.xls/.xlsx)

- Portable Document Format (.pdf)

- Under Additional settings, choose your preferred chunking strategy (for this post, we choose Fixed size chunking), then specify the chunk size and the overlap as a percentage. Alternatively, you can use the default settings.

- Choose Next.

- In the Embeddings model section, choose the Titan Embeddings model from Amazon Bedrock.

- In the Vector database section, select Quick create a new vector store, which manages the process of setting up a vector store.

- Choose Next.

- Review the settings and choose Create knowledge base.

- Wait for the knowledge base creation to complete and confirm its status is Ready.

- In the Data source section, or on the banner at the top of the page or the popup in the test window, choose Sync to trigger the process of loading data from the S3 bucket, splitting it into chunks of the size you specified, generating vector embeddings using the selected text embedding model, and storing them in the vector store managed by Knowledge Bases for Amazon Bedrock.
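If you prefer to script the data source step instead of using the console, the following is a hedged sketch that uses the bedrock-agent client's create_data_source operation with fixed-size chunking. It assumes the knowledge base already exists. The following details are our own assumptions, so check the API reference for current limits:

- the helper names
- the default of 300 tokens with 20 percent overlap
- the 1 to 99 overlap range we validate

```python
def fixed_size_ingestion_config(max_tokens=300, overlap_percentage=20):
    """Build a vectorIngestionConfiguration for fixed-size chunking.

    The validation ranges below are assumptions; check the current API limits.
    """
    if max_tokens < 1:
        raise ValueError("max_tokens must be positive")
    if not 1 <= overlap_percentage <= 99:
        raise ValueError("overlap_percentage must be between 1 and 99")
    return {
        "chunkingConfiguration": {
            "chunkingStrategy": "FIXED_SIZE",
            "fixedSizeChunkingConfiguration": {
                "maxTokens": max_tokens,
                "overlapPercentage": overlap_percentage,
            },
        }
    }


def create_s3_data_source(client, knowledge_base_id, name, bucket_arn,
                          max_tokens=300, overlap_percentage=20):
    """Attach an S3 bucket to an existing knowledge base; returns the data source ID.

    `client` is expected to be boto3.client("bedrock-agent").
    """
    response = client.create_data_source(
        knowledgeBaseId=knowledge_base_id,
        name=name,
        dataSourceConfiguration={
            "type": "S3",
            "s3Configuration": {"bucketArn": bucket_arn},
        },
        vectorIngestionConfiguration=fixed_size_ingestion_config(
            max_tokens, overlap_percentage
        ),
    )
    return response["dataSource"]["dataSourceId"]


# Usage (requires boto3 and AWS credentials; IDs and ARN are placeholders):
# import boto3
# ds_id = create_s3_data_source(boto3.client("bedrock-agent"), "YOUR_KB_ID",
#                               "clinical-trials", "arn:aws:s3:::your-bucket")
```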

The sync function supports ingesting, updating, and deleting the documents from the vector index based on changes to documents in Amazon S3. You can also use the StartIngestionJob API to trigger the sync via the AWS SDK.
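A sync started through the SDK runs as an ingestion job. The following minimal sketch uses the bedrock-agent client's start_ingestion_job and get_ingestion_job operations and polls until the job finishes. Three details are our assumptions: the helper name, the polling interval, and the set of terminal statuses (the API reports COMPLETE where the console shows Completed).

```python
import time

# Statuses after which the job will not change further (assumed set).
TERMINAL_STATUSES = {"COMPLETE", "FAILED", "STOPPED"}


def sync_data_source(client, knowledge_base_id, data_source_id,
                     poll_seconds=10.0, sleep=time.sleep):
    """Start an ingestion job and block until it reaches a terminal status.

    `client` is expected to be boto3.client("bedrock-agent"); `sleep` is
    injectable so the loop can be exercised without waiting.
    """
    job = client.start_ingestion_job(
        knowledgeBaseId=knowledge_base_id,
        dataSourceId=data_source_id,
    )["ingestionJob"]
    while job["status"] not in TERMINAL_STATUSES:
        sleep(poll_seconds)
        job = client.get_ingestion_job(
            knowledgeBaseId=knowledge_base_id,
            dataSourceId=data_source_id,
            ingestionJobId=job["ingestionJobId"],
        )["ingestionJob"]
    return job


# Usage (requires boto3 and AWS credentials; IDs are placeholders):
# import boto3
# job = sync_data_source(boto3.client("bedrock-agent"), "YOUR_KB_ID", "YOUR_DS_ID")
# print(job["status"])
```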

When the sync is complete, the Sync history shows status Completed.

Query the knowledge base

In this section, we demonstrate how to access detailed information in the knowledge base through straightforward, natural queries. We use an unstructured synthetic dataset of PDF files, each 10 to 100 pages long. The files simulate a clinical trial plan for a proposed new medicine, including statistical analysis methods and participant consent forms. We use the Knowledge Bases for Amazon Bedrock retrieve_and_generate and retrieve APIs, as well as the Amazon Bedrock LangChain integration.

Before you can write scripts that use the Amazon Bedrock API, you need to install a version of the AWS SDK recent enough to include the Amazon Bedrock clients. For Python scripts, this is the AWS SDK for Python (Boto3), which you can install or upgrade with pip install --upgrade boto3.
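One quick way to confirm that the installed Boto3 is recent enough is to check that it knows the three Bedrock service clients this walkthrough relies on. The following sketch uses boto3.session.Session().get_available_services(). The helper names are hypothetical, and we assume these three client names are the ones you need.

```python
# Boto3 client names used later in this walkthrough (assumed list).
REQUIRED_SERVICES = ("bedrock-runtime", "bedrock-agent", "bedrock-agent-runtime")


def missing_services(available):
    """Return the required service names that are absent from `available`."""
    available = set(available)
    return [name for name in REQUIRED_SERVICES if name not in available]


def check_installed_sdk():
    """List required Bedrock clients that the installed Boto3 does not provide."""
    import boto3  # imported lazily so missing_services works without boto3

    return missing_services(boto3.session.Session().get_available_services())


# Usage: an empty list means the installed SDK includes all three clients.
# print(check_installed_sdk())
```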

Additionally, enable access to the Amazon Titan Embeddings model and Anthropic Claude v2 or v1. For more information, refer to Model access.

Generate questions using Amazon Bedrock

We can use Anthropic Claude 2.1 on Amazon Bedrock to propose a list of questions to ask about the clinical trial dataset.
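The following sketch shows question generation with Claude 2.1, using the bedrock-runtime invoke_model operation and the Human/Assistant text-completions request format that Claude v2 models accept on Amazon Bedrock. Several details are our assumptions rather than the original code:

- the model ID anthropic.claude-v2:1
- the sampling parameters
- the helper names
- the example instruction

```python
import json

CLAUDE_MODEL_ID = "anthropic.claude-v2:1"  # assumed ID for Claude 2.1


def build_claude_body(instruction, max_tokens=1000, temperature=0.5):
    """Claude v2.x text-completions body: the prompt alternates Human/Assistant turns."""
    return json.dumps({
        "prompt": f"\n\nHuman: {instruction}\n\nAssistant:",
        "max_tokens_to_sample": max_tokens,
        "temperature": temperature,
    })


def generate_text(runtime_client, instruction, model_id=CLAUDE_MODEL_ID, **kwargs):
    """Invoke the model and return its completion text.

    `runtime_client` is expected to be boto3.client("bedrock-runtime").
    """
    response = runtime_client.invoke_model(
        modelId=model_id,
        body=build_claude_body(instruction, **kwargs),
        contentType="application/json",
        accept="application/json",
    )
    # The response body is a stream of JSON bytes containing "completion".
    return json.loads(response["body"].read())["completion"]


# Usage (requires boto3, AWS credentials, and model access):
# import boto3
# print(generate_text(boto3.client("bedrock-runtime"),
#       "Propose five questions a participant should ask about a clinical trial "
#       "informed consent form."))
```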

Use the Amazon Bedrock RetrieveAndGenerate API

For a fully managed RAG experience, you can use the native Knowledge Bases for Amazon Bedrock RetrieveAndGenerate API to obtain answers directly.
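The following minimal sketch calls RetrieveAndGenerate through the bedrock-agent-runtime client. A single request retrieves the relevant chunks and generates an answer with the model you specify. The knowledge base ID and the model ARN (including its us-east-1 Region) are placeholders we chose for illustration.

```python
# Placeholders (assumptions): use your own knowledge base ID, Region, and model.
KNOWLEDGE_BASE_ID = "YOUR_KB_ID"
MODEL_ARN = "arn:aws:bedrock:us-east-1::foundation-model/anthropic.claude-v2:1"


def ask_knowledge_base(agent_runtime, question,
                       knowledge_base_id=KNOWLEDGE_BASE_ID, model_arn=MODEL_ARN):
    """One managed RAG call: retrieval plus answer generation.

    `agent_runtime` is expected to be boto3.client("bedrock-agent-runtime").
    The response holds the answer under ["output"]["text"], plus "citations"
    and a "sessionId".
    """
    return agent_runtime.retrieve_and_generate(
        input={"text": question},
        retrieveAndGenerateConfiguration={
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": knowledge_base_id,
                "modelArn": model_arn,
            },
        },
    )


# Usage (requires boto3 and AWS credentials):
# import boto3
# response = ask_knowledge_base(boto3.client("bedrock-agent-runtime"),
#                               "What is the purpose of the study?")
# print(response["output"]["text"])
```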

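The cited information sources are returned in the citations field of the RetrieveAndGenerate response. Each citation lists the retrieved references that support part of the answer, including their text and S3 location.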
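The following sketch walks the citations structure of a RetrieveAndGenerate response and collects each reference's S3 URI and text excerpt. The helper names are hypothetical. The .get() defaults are a defensive assumption, so that references without an S3 location do not raise.

```python
def cited_sources(response):
    """Return (source URI, excerpt) pairs from a RetrieveAndGenerate response."""
    sources = []
    for citation in response.get("citations", []):
        for ref in citation.get("retrievedReferences", []):
            uri = ref.get("location", {}).get("s3Location", {}).get("uri", "")
            excerpt = ref.get("content", {}).get("text", "")
            sources.append((uri, excerpt))
    return sources


def unique_source_uris(response):
    """Distinct source documents cited in the answer, in first-seen order."""
    seen = []
    for uri, _ in cited_sources(response):
        if uri and uri not in seen:
            seen.append(uri)
    return seen
```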

By passing the session ID returned by the RetrieveAndGenerate API, you can preserve the conversation context and ask follow-up questions. For example, if you ask for more details about the previous answer without that context, the model may not be able to answer correctly.

By passing the session ID, however, the RAG pipeline can identify the corresponding context and return relevant answers.
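A small wrapper makes the session behavior explicit. The first call omits sessionId. Every later call passes the ID returned by the previous response, so follow-ups such as "give me more details about the previous answer" are resolved against earlier turns. The class and method names are hypothetical.

```python
class KnowledgeBaseChat:
    """Reuses the RetrieveAndGenerate session ID across conversation turns."""

    def __init__(self, agent_runtime, knowledge_base_id, model_arn):
        # agent_runtime is expected to be boto3.client("bedrock-agent-runtime").
        self.agent_runtime = agent_runtime
        self.config = {
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": knowledge_base_id,
                "modelArn": model_arn,
            },
        }
        self.session_id = None  # populated from the first response

    def ask(self, question):
        kwargs = {
            "input": {"text": question},
            "retrieveAndGenerateConfiguration": self.config,
        }
        if self.session_id is not None:
            # Continue the existing conversation instead of starting a new one.
            kwargs["sessionId"] = self.session_id
        response = self.agent_runtime.retrieve_and_generate(**kwargs)
        self.session_id = response["sessionId"]
        return response["output"]["text"]

    def reset(self):
        """Forget the session so the next question starts a fresh conversation."""
        self.session_id = None
```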

The following table shows the retrieved answers to all the corresponding questions.

| Question | Answer |
| --- | --- |
| What is the purpose of the study? Make sure you understand the goals of the research and what the study procedures will entail. | The purpose of the study is to test whether lithium is effective at preventing repeated suicidal self-directed violence in patients with depression or bipolar disorder. |
| What are the risks and potential benefits? The form should explain all foreseeable risks, side effects, or discomforts you might experience from participating. | The possible risks or discomforts include: the interview questions causing discomfort, side effects from the lithium medication such as nausea, loose stools, thirst, urination changes, shakiness, headaches, sweating, fatigue, decreased concentration, skin rash, thyroid changes, worsening acne/psoriasis, lithium toxicity, and risks if the medication is suddenly stopped. The potential benefits are that the tests may lead to new information to help the participant, and lithium may help prevent repeated suicidal self-directed violence for those with depression or bipolar disorder. |
| What will participation involve? Get details on what tests, medications, lifestyle changes, or procedures you will go through, how much time it will take, and how long the study will last. | Participation will involve completing an interview and questionnaires covering thinking, behaviors, mental health treatment, medications, alcohol and drug use, home and social supports, and understanding of the research study. This takes about two hours and can be done in multiple sessions, in person and by phone. If eligible for the full study, there will be about 20 study visits over one year. This will involve taking study medication, having vital signs checked, completing questionnaires, reviewing side effects, and continuing normal medical and mental health care. |
| Are there any costs or payments? Ask if you will be responsible for any costs related to the study or get paid for participating. | Yes, there are costs and payments discussed in the search results. You will not be charged for any treatments or procedures that are part of the study. However, you will still have to pay any usual VA co-payments for care and medications not related to the study. You will not be paid for participation, but the study will reimburse expenses related to participation like transportation, parking, etc. Reimbursement amounts and process are provided. |
| How will my privacy be protected? The form should explain how your personal health information will be kept confidential before, during, and after the trial. | Your privacy will be protected by conducting interviews in private, keeping written notes in locked files and offices, storing electronic information in encrypted and password protected files, and obtaining a Confidentiality Certificate from the Department of Health and Human Services to prevent disclosing information that identifies you. Information that identifies you may be shared with doctors responsible for your care or for audits and evaluations by government agencies, but talks and papers about the study will not identify you. |

Query using the Amazon Bedrock Retrieve API

To customize your RAG workflow, you can use the Retrieve API to fetch the relevant chunks for your query and pass them to any LLM provided by Amazon Bedrock. To use the Retrieve API, first define a function that calls it.
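The following sketch defines that function with the bedrock-agent-runtime retrieve operation. Unlike RetrieveAndGenerate, it returns only the ranked chunks, so you decide how to prompt the model. The function name and the default of three results are our assumptions.

```python
def retrieve(agent_runtime, query, knowledge_base_id, number_of_results=3):
    """Fetch the top-scoring chunks for `query` from a knowledge base.

    `agent_runtime` is expected to be boto3.client("bedrock-agent-runtime").
    The response lists chunks under "retrievalResults", each with "content",
    "location", and "score".
    """
    return agent_runtime.retrieve(
        knowledgeBaseId=knowledge_base_id,
        retrievalQuery={"text": query},
        retrievalConfiguration={
            "vectorSearchConfiguration": {"numberOfResults": number_of_results}
        },
    )


# Usage (requires boto3 and AWS credentials; the ID is a placeholder):
# import boto3
# response = retrieve(boto3.client("bedrock-agent-runtime"),
#                     "What is the purpose of the study?", "YOUR_KB_ID")
```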

Next, retrieve the context relevant to your question.
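To inspect what came back without printing whole chunks, a helper can summarize each result as its score, its source URI, and a truncated text snippet. The helper name and the 80-character cutoff are hypothetical choices.

```python
def summarize_results(response, max_chars=80):
    """One line per retrieved chunk: score, S3 URI, and a truncated text snippet."""
    lines = []
    for result in response.get("retrievalResults", []):
        uri = result.get("location", {}).get("s3Location", {}).get("uri", "?")
        text = result.get("content", {}).get("text", "")
        snippet = text if len(text) <= max_chars else text[:max_chars] + "..."
        lines.append(f"{result.get('score', 0.0):.3f}  {uri}  {snippet}")
    return lines


# Usage, with `response` returned by the Retrieve API:
# print("\n".join(summarize_results(response)))
```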

Then extract the retrieved text to fill the prompt template.
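The following sketch pulls the chunk texts out of the Retrieve response in ranked order. The optional min_score filter is a hypothetical addition that is not part of the post. It shows one place where you might drop weakly related chunks before prompting.

```python
def get_contexts(response, min_score=None):
    """Return the text of each retrieved chunk, optionally skipping low scores."""
    contexts = []
    for result in response.get("retrievalResults", []):
        if min_score is not None and result.get("score", 0.0) < min_score:
            continue  # hypothetical relevance cutoff
        contexts.append(result["content"]["text"])
    return contexts
```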

Finally, import the required Python modules, set up an in-context question answering prompt template, and generate the final answer.

Query using Amazon Bedrock LangChain integration

To create an end-to-end customized Q&A application, Knowledge Bases for Amazon Bedrock provides integration with LangChain. To set up the LangChain retriever, provide the knowledge base ID and specify the number of results to return from the query.
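The following sketch sets up the retriever with AmazonKnowledgeBasesRetriever, which takes a knowledge_base_id and a retrieval_config in the same shape as the Retrieve API's retrievalConfiguration. We assume the langchain-community package. In newer releases, the class is also provided by langchain-aws, so adjust the import to your installed version. The helper names and the default of four results are hypothetical.

```python
def retrieval_config(number_of_results=4):
    """Retrieval settings passed through to the Knowledge Bases Retrieve API."""
    if number_of_results < 1:
        raise ValueError("number_of_results must be positive")
    return {"vectorSearchConfiguration": {"numberOfResults": number_of_results}}


def make_retriever(knowledge_base_id, number_of_results=4):
    """Build a LangChain retriever backed by a Bedrock knowledge base."""
    # Lazy import so retrieval_config can be used without LangChain installed.
    from langchain_community.retrievers import AmazonKnowledgeBasesRetriever

    return AmazonKnowledgeBasesRetriever(
        knowledge_base_id=knowledge_base_id,
        retrieval_config=retrieval_config(number_of_results),
    )


# Usage (requires langchain-community, boto3, and AWS credentials):
# retriever = make_retriever("YOUR_KB_ID")
# docs = retriever.invoke("What is the purpose of the study?")
```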

Next, set up a LangChain RetrievalQA chain and generate answers from the knowledge base.
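The following sketch wires the retriever into RetrievalQA with a Bedrock-hosted Claude model. The "stuff" chain type places all retrieved chunks into a single prompt, and return_source_documents=True keeps the chunks for attribution. The following details are our assumptions:

- the langchain and langchain-community packages, with the Bedrock LLM class imported from langchain_community.llms
- the model ID
- the model_kwargs
- the helper names

```python
def make_qa_chain(retriever, runtime_client, model_id="anthropic.claude-v2:1"):
    """Build a RetrievalQA chain over a knowledge base retriever (sketch)."""
    # Lazy imports: require the langchain and langchain-community packages.
    from langchain.chains import RetrievalQA
    from langchain_community.llms import Bedrock

    llm = Bedrock(
        model_id=model_id,
        client=runtime_client,  # a boto3 "bedrock-runtime" client
        model_kwargs={"max_tokens_to_sample": 500, "temperature": 0},
    )
    return RetrievalQA.from_chain_type(
        llm=llm,
        chain_type="stuff",  # concatenate all retrieved chunks into one prompt
        retriever=retriever,
        return_source_documents=True,
    )


def ask(qa_chain, question):
    """Return the chain's answer and the metadata of the chunks it used."""
    result = qa_chain.invoke({"query": question})
    sources = [doc.metadata for doc in result.get("source_documents", [])]
    return result["result"], sources
```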

This will generate corresponding answers similar to the ones listed in the earlier table.

Clean up

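To avoid incurring additional charges, delete the resources created in this walkthrough when you no longer need them. These include the knowledge base and its data source, the OpenSearch Serverless vector store set up by Quick create, the S3 bucket holding the dataset, and the IAM service role.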
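The knowledge base side of the cleanup can be scripted with the bedrock-agent client. The following sketch pages through list_data_sources, deletes each data source with delete_data_source, and then removes the knowledge base with delete_knowledge_base. We assume that the OpenSearch Serverless collection, the S3 bucket, and the IAM role are deleted separately, so the sketch does not touch them. The helper names are hypothetical.

```python
def list_data_source_ids(client, knowledge_base_id):
    """Collect every data source ID attached to a knowledge base, following pagination."""
    ids, token = [], None
    while True:
        kwargs = {"knowledgeBaseId": knowledge_base_id}
        if token:
            kwargs["nextToken"] = token
        page = client.list_data_sources(**kwargs)
        ids.extend(s["dataSourceId"] for s in page.get("dataSourceSummaries", []))
        token = page.get("nextToken")
        if not token:
            return ids


def delete_knowledge_base_and_sources(client, knowledge_base_id):
    """Delete all data sources, then the knowledge base; returns deleted source IDs.

    `client` is expected to be boto3.client("bedrock-agent"). The vector store,
    S3 bucket, and IAM role are not deleted here.
    """
    data_source_ids = list_data_source_ids(client, knowledge_base_id)
    for data_source_id in data_source_ids:
        client.delete_data_source(
            knowledgeBaseId=knowledge_base_id, dataSourceId=data_source_id
        )
    client.delete_knowledge_base(knowledgeBaseId=knowledge_base_id)
    return data_source_ids
```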

Conclusion

Amazon Bedrock provides a broad set of deeply integrated services to power RAG applications of all scales, making it straightforward to get started with analyzing your company data. Knowledge Bases for Amazon Bedrock integrates with Amazon Bedrock foundation models to build scalable document embedding pipelines and document retrieval services to power a wide range of internal and customer-facing applications. We are excited about the future ahead, and your feedback will play a vital role in guiding the progress of this product. To learn more about the capabilities of Amazon Bedrock and knowledge bases, refer to Knowledge base for Amazon Bedrock.

About the Authors

Mark Roy is a Principal Machine Learning Architect for AWS, helping customers design and build AI/ML solutions. Mark’s work covers a wide range of ML use cases, with a primary interest in computer vision, deep learning, and scaling ML across the enterprise. He has helped companies in many industries, including insurance, financial services, media and entertainment, healthcare, utilities, and manufacturing. Mark holds six AWS Certifications, including the ML Specialty Certification. Prior to joining AWS, Mark was an architect, developer, and technology leader for over 25 years, including 19 years in financial services.

Mani Khanuja is a Tech Lead for Generative AI Specialists, author of the book Applied Machine Learning and High Performance Computing on AWS, and a member of the Board of Directors of the Women in Manufacturing Education Foundation. She leads machine learning (ML) projects in various domains such as computer vision, natural language processing, and generative AI. She helps customers build, train, and deploy large machine learning models at scale. She speaks at internal and external conferences such as re:Invent, Women in Manufacturing West, YouTube webinars, and GHC 23. In her free time, she likes to go for long runs along the beach.

Dr. Baichuan Sun, currently serving as a Sr. AI/ML Solution Architect at AWS, focuses on generative AI and applies his knowledge in data science and machine learning to provide practical, cloud-based business solutions. With experience in management consulting and AI solution architecture, he addresses a range of complex challenges, including robotics computer vision, time series forecasting, and predictive maintenance, among others. His work is grounded in a solid background of project management, software R&D, and academic pursuits. Outside of work, Dr. Sun enjoys the balance of traveling and spending time with family and friends.

Derrick Choo is a Senior Solutions Architect at AWS focused on accelerating customers’ journeys to the cloud and transforming their businesses through the adoption of cloud-based solutions. His expertise is in full stack application and machine learning development. He helps customers design and build end-to-end solutions covering frontend user interfaces, IoT applications, API and data integrations, and machine learning models. In his free time, he enjoys spending time with his family and experimenting with photography and videography.

Frank Winkler is a Senior Solutions Architect and Generative AI Specialist at AWS based in Singapore, focused on Machine Learning and Generative AI. He works with global digital native companies to architect scalable, secure, and cost-effective products and services on AWS. In his free time, he spends time with his son and daughter, and travels to enjoy the waves across ASEAN.

Nihir Chadderwala is a Sr. AI/ML Solutions Architect in the Global Healthcare and Life Sciences team. His expertise is in building Big Data and AI-powered solutions to customer problems, especially in the biomedical, life sciences, and healthcare domains. He is also excited about the intersection of quantum information science and AI and enjoys learning and contributing to this space. In his spare time, he enjoys playing tennis, traveling, and learning about cosmology.

- Source: https://aws.amazon.com/blogs/machine-learning/use-rag-for-drug-discovery-with-knowledge-bases-for-amazon-bedrock/